Why Ethical AI Development Matters Right Now

Ethical AI development is the practice of building and deploying AI systems that are fair, transparent, accountable, and safe.

Here is a quick snapshot of what it involves:

| Principle | What It Means in Practice |
|---|---|
| Fairness | Reduce bias in training data and model outputs |
| Transparency | Make AI decisions explainable to users and overseers |
| Accountability | Assign clear responsibility for AI outcomes |
| Privacy | Protect user data with consent, encryption, and minimization |
| Sustainability | Reduce the environmental footprint of AI systems |
| Human oversight | Keep humans involved in high-stakes decisions |

As AI reshapes industries, the stakes keep rising. AI systems are only as trustworthy as the data, governance, and decision-making behind them.

Recent developments show why this matters now. Many organizations have already adopted formal AI principles, global institutions have established ethical frameworks, and regulators are moving toward stricter oversight. What was once a niche discussion is now a board-level priority.

For large companies especially, ethical AI development also supports strong enterprise SEO performance. Clear governance, transparent documentation, trustworthy content, and responsible data practices all strengthen search visibility over time. In practical terms, organizations that communicate how their AI works, where human oversight exists, and how risk is managed are better positioned to publish authoritative content that performs well in search.

Enterprise SEO strategies that tend to work best include:

- Publishing clear, expert-led educational content tied to real business use cases

- Building topic clusters around governance, compliance, implementation, and risk

- Using consistent internal linking across related service and insight pages

- Maintaining strong content quality controls and review workflows

- Prioritizing trust signals such as author expertise, citations, and transparent policies

I’m Chris Robino, a digital strategy and AI expert with over two decades of experience helping companies navigate AI-driven transformation, including the responsible application of emerging technologies. In this guide, I’ll walk you through the essentials of ethical AI development and how to approach it with clarity.

Core Principles and Challenges of Ethical AI Development

When we talk about ethical AI development, we aren’t just talking about a “vibe” or a set of fuzzy suggestions. We are talking about a multidisciplinary field that ensures technology respects human values. In our work at Chris Robino, we’ve seen that the most successful organizations treat ethics as a fundamental requirement rather than an optional extra.

The core principles of ethical AI development typically center on five pillars:

- Transparency and Explainability: Can we explain how the AI reached a specific conclusion? If an AI denies a loan or flags a medical issue, the “black box” approach isn’t good enough. We need to trace the reasoning.

- Fairness and Non-discrimination: We must ensure that AI doesn’t amplify existing societal biases. This means treating all individuals fairly and avoiding prejudices in training data.

- Accountability: When an AI system makes a mistake, who is responsible? We need clear frameworks that assign responsibility to developers, organizations, and regulators.

- Privacy and Data Protection: AI thrives on data, but that data must be sourced ethically, stored securely, and used only with informed consent.

- Sustainability: We have to consider the physical impact. Training large models consumes massive amounts of energy and water.

Mitigating Bias and Risks in Ethical AI Development

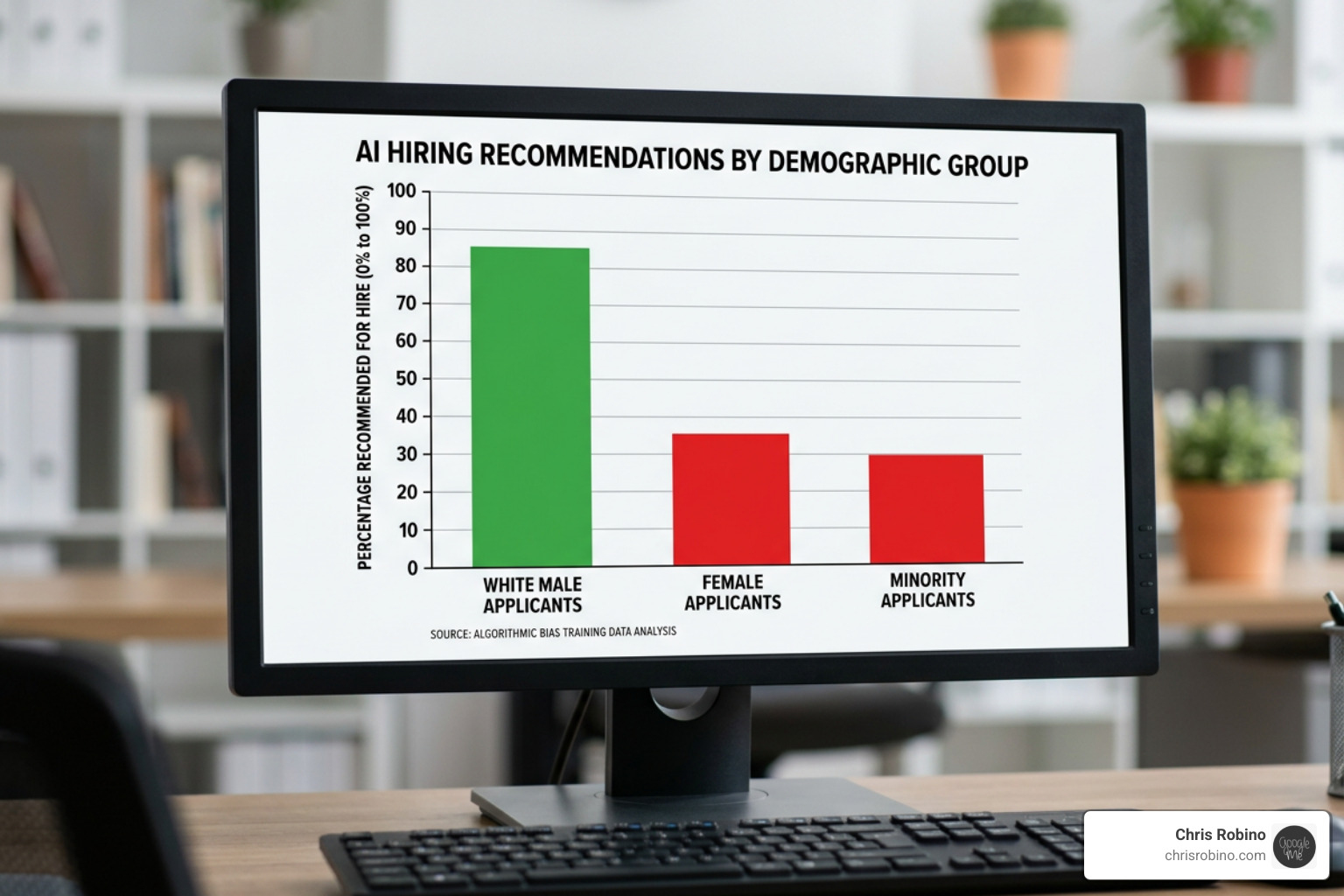

One of the greatest hurdles we face is the “garbage in, garbage out” problem. If training data reflects historical prejudices, the AI will simply automate those prejudices. The resulting harms are often grouped into two types:

| Harm Type | Definition | Example |
|---|---|---|
| Representational Harm | Reinforcing stereotypes or demeaning certain groups. | An image generator that only shows light-skinned men when asked for “CEO.” |
| Allocational Harm | Unfairly distributing resources or opportunities. | A recruiting tool that downgrades resumes containing the word “women’s.” |
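Allocational harm in particular can be measured. The sketch below is one minimal way to do it, assuming you can log each decision alongside a group label: it computes the selection rate per group and the ratio of the lowest rate to the highest. The group names, the sample outcomes, and the 0.8 review threshold (borrowed from the “four-fifths” rule of thumb used in US employment selection) are illustrative assumptions, not a legal test or a complete fairness audit.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return {group: share of positive outcomes} from (group, selected) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so the rates are equal
    return min(rates.values()) / highest


# Hypothetical screening outcomes: (group label, was the candidate advanced?)
decisions = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)

REVIEW_THRESHOLD = 0.8  # assumption: four-fifths rule of thumb
needs_review = ratio < REVIEW_THRESHOLD
```

With this sample data, group_b is advanced half as often as group_a, so the check flags the system for human review. A low ratio does not prove discrimination on its own, and a high one does not rule it out; it is a prompt to look closer.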

The UNESCO Recommendation on the Ethics of AI, adopted by 193 member states, emphasizes a human-centered approach to combat these risks. Beyond bias, we also face the rise of deepfakes and misinformation. These tools can be weaponized for political disinformation or to damage personal reputations.

Furthermore, the threat of job displacement is real. AI could drive a major shift in white-collar employment. This makes “pro-worker AI” a critical part of the ethical conversation: using AI to augment human capabilities rather than simply replacing them.

Global Standards and Regulatory Frameworks

We are currently in a “Wild West” phase of AI, but the sheriff is coming to town. Different regions are taking different approaches to governance:

- The EU AI Act: This is the first comprehensive regulation of its kind. It uses a risk-based approach. Applications deemed “high risk” (like those used in healthcare, law enforcement, or education) are subject to much stricter scrutiny. You can read more about the EU AI Act to understand how it classifies tools.

- OECD AI Principles: These focus on international coordination to maximize the benefits of AI while distributing them fairly.

- National Approaches: Countries like Singapore and Canada have published their own model governance frameworks, emphasizing human-centric AI.

- ISO/IEC 23894: This international standard provides guidance on managing AI-related risk, helping organizations integrate ethical considerations into their existing risk management processes.

Environmental Sustainability and Future Concerns

We cannot ignore the “Carbon in the Cloud.” The environmental impact of AI is a growing concern. By one widely cited 2019 estimate, training a single large language model (including an architecture search) can emit roughly as much carbon as five cars over their entire lifetimes.

To manage this, we recommend using tools like CodeCarbon, which integrates into your codebase to estimate the CO2 produced by your computing resources. Beyond carbon, AI data centers are notorious for high water consumption for cooling.
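To make the underlying arithmetic concrete, here is a back-of-envelope sketch of the kind of estimate such tools automate. It is not CodeCarbon's API, and every input (hardware power draw, run time, data center overhead, grid carbon intensity) is a hypothetical value you would replace with your own measurements.

```python
def estimate_emissions_kg(avg_power_watts, hours, pue, grid_kg_co2_per_kwh):
    """Rough CO2-equivalent estimate for a compute job.

    avg_power_watts:      average draw of the hardware during the run
    hours:                wall-clock duration of the run
    pue:                  power usage effectiveness (data center overhead, >= 1.0)
    grid_kg_co2_per_kwh:  carbon intensity of the local electricity grid
    """
    energy_kwh = avg_power_watts / 1000 * hours * pue
    return energy_kwh * grid_kg_co2_per_kwh


# Hypothetical job: 8 accelerators at 300 W each for 100 hours,
# in a facility with PUE 1.5, on a grid emitting 0.4 kg CO2e per kWh.
estimate = estimate_emissions_kg(
    avg_power_watts=8 * 300,
    hours=100,
    pue=1.5,
    grid_kg_co2_per_kwh=0.4,
)
print(f"Estimated emissions: {estimate:.0f} kg CO2e")
```

Under these assumptions the job comes out at roughly 144 kg CO2e. The formula also shows where the levers are: shorter runs, more efficient hardware, and a cleaner grid each reduce the total proportionally.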

Looking further ahead, the debate over superintelligence looms. While some experts believe we are far from AI that surpasses human creativity, others argue that long-term safety research must start now. We also see emerging technologies like quantum AI and blockchain being explored as ways to enhance transparency, with blockchain in particular proposed for immutable audit trails of AI decisions.

Implementing Ethical AI Development in Your Organization

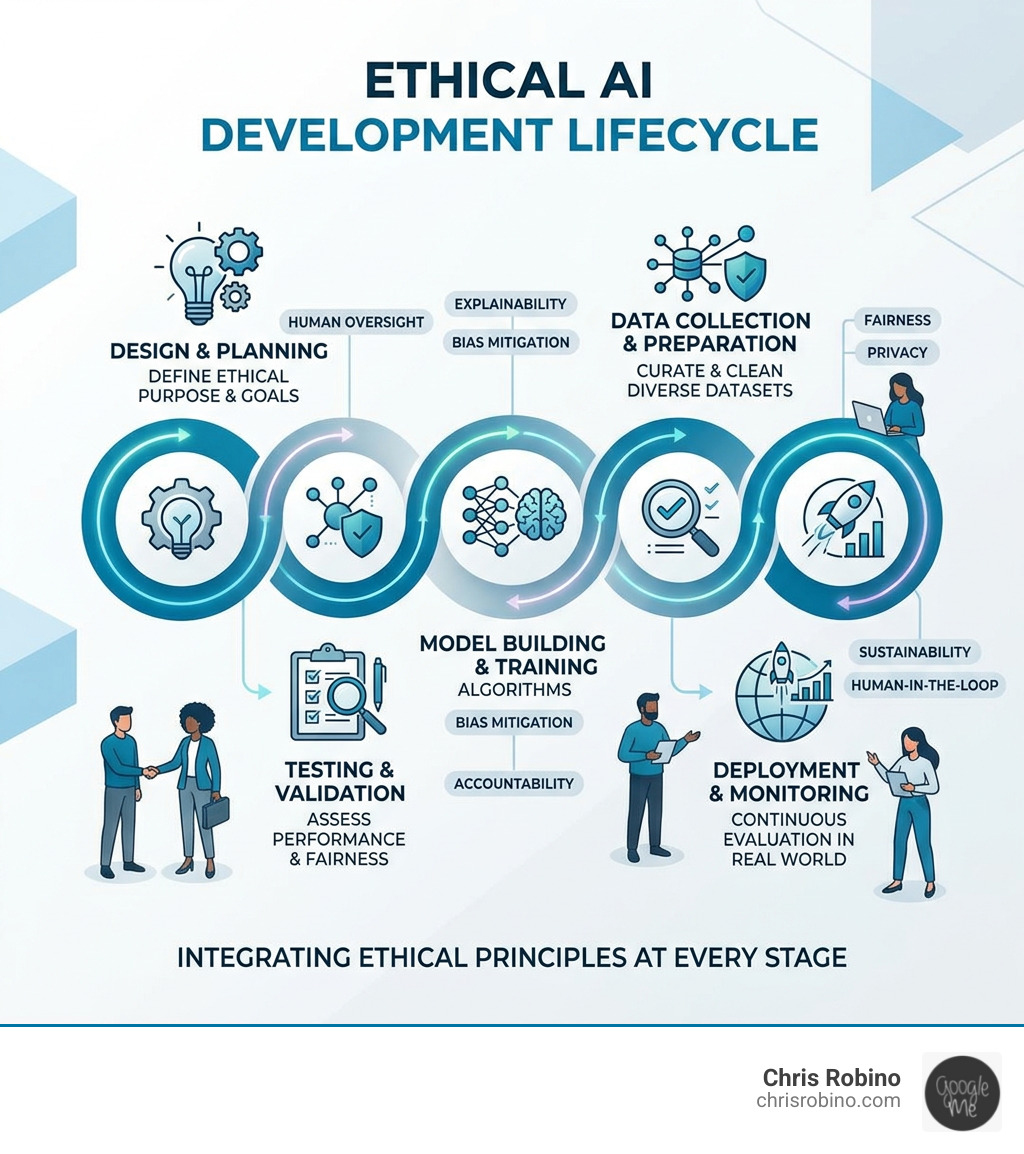

Implementation is where the “rubber meets the road.” We believe in a “shift left” mentality—integrating ethics into the very beginning of the design phase rather than trying to fix a biased model after it has already been deployed.

A Step-by-Step Guide to Ethical AI Development

If you are looking to build ethical AI, we suggest this practical roadmap:

- Define the Purpose and Scope: Ask if AI is even necessary for the task. Sometimes, a simpler, lower-risk non-AI method is more effective.

- Ethical Data Sourcing: Ensure you have the rights to use the data. Practice data minimization: collect only what is strictly necessary for the project.

- Bias Auditing: Use “golden datasets” to test your model against known fairness benchmarks. If you find your data is skewed, oversample underrepresented groups to balance the scales.

- Transparency Artifacts: Use model cards to document how your model works, its limitations, and its intended use cases. This makes your work auditable by third parties.

- Human-in-the-Loop: For high-stakes decisions (like medical diagnostics or hiring), always ensure a human makes the final call.

- Continuous Monitoring: AI models can “drift” over time as they encounter new data. Regular audits are required to ensure the system remains fair and accurate post-deployment.
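Two of the steps above lend themselves to a short sketch: rebalancing skewed data by oversampling, and checking for drift after deployment. The code below is a minimal illustration using only the Python standard library. The function names, the `group` field, and the sample data are hypothetical, and the 0.25 alert level for the population stability index (PSI) is a commonly quoted rule of thumb rather than a standard.

```python
import math
import random
from collections import Counter, defaultdict


def oversample(rows, group_key, seed=0):
    """Duplicate rows from smaller groups at random until every group
    is as large as the largest one."""
    if not rows:
        return []
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for row in rows:
        by_group[row[group_key]].append(row)
    target = max(len(members) for members in by_group.values())
    balanced = []
    for members in by_group.values():
        balanced.extend(members)
        # Sample with replacement to fill the gap up to the target size.
        balanced.extend(rng.choices(members, k=target - len(members)))
    return balanced


def population_stability_index(baseline, current, epsilon=1e-6):
    """PSI between two samples of a categorical value, such as model decisions.
    0.0 means the two distributions are identical; larger means more drift."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    base_counts, cur_counts = Counter(baseline), Counter(current)
    psi = 0.0
    for category in set(baseline) | set(current):
        # epsilon keeps the logarithm defined when a category is absent.
        expected = max(base_counts[category] / len(baseline), epsilon)
        actual = max(cur_counts[category] / len(current), epsilon)
        psi += (actual - expected) * math.log(actual / expected)
    return psi


# Hypothetical training rows: group "b" is underrepresented.
rows = [{"group": "a"}] * 6 + [{"group": "b"}] * 2
balanced = oversample(rows, "group")

# Hypothetical decisions at launch versus this month.
at_launch = ["approve"] * 80 + ["deny"] * 20
this_month = ["approve"] * 50 + ["deny"] * 50
drift = population_stability_index(at_launch, this_month)
drift_alert = drift > 0.25  # assumption: rule-of-thumb alert level
```

Oversampling only repeats records you already have, so it balances group sizes without adding new information; where possible, collecting more representative data is the stronger fix. Likewise, a drift alert tells you that the mix of outcomes has changed, not why, which is where the human review comes in.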

The Role of Startups and Corporate Governance

Startups actually have a unique advantage here. Because they are often building from scratch, they can embed these principles into their DNA. Research on AI startups suggests that those with institutional funding or those already affected by GDPR are significantly more likely to adopt ethical AI principles.

Larger organizations can look to established industry leaders for inspiration. Many major technology firms have established dedicated ethics committees and “Responsible Use” frameworks to guide their decision-making.

Why bother? Beyond the moral imperative, ethical AI is a competitive advantage. Clients and users are increasingly wary of biased or invasive technology. Organizations that can prove their systems are trustworthy will win the long-term race for market share and talent.

Conclusion: Balancing Innovation with Responsibility

At Chris Robino, we believe that the future belongs to those who can be both bold and responsible. We don’t have to choose between cutting-edge innovation and societal well-being. In fact, the two are deeply intertwined.

Ethical literacy is becoming a mandatory skill for leaders in the AI age. By staying aware of where risks exist and proactively building safeguards, we can harness the power of AI to solve humanity’s biggest challenges—from climate change to healthcare—without sacrificing our values.

Getting AI governance right is one of the most consequential challenges of our time. It requires collaboration between governments, companies, and academics. If you are ready to take the next step in your journey, we are here to help you accelerate digital transformation while keeping ethics at the core of your strategy.

The era of “move fast and break things” is over for AI. The new mantra is “move fast and build trust.” Let’s build something we can all be proud of.